Including reasoning "chains of thought" (CoT) in the model output significantly improves its quality, but it increases inference cost.

- Distillation transfers reasoning knowledge from an expensive teacher model to a more cost-efficient student, reducing overall inference cost.

- DeepSeek R1 can produce detailed CoT, making it an excellent teacher model.

- Synthetic data generated by DeepSeek R1 may outperform data produced by human experts.

Introduction

The recent release of DeepSeek R1 has taken the AI community by storm, offering performance on par with leading frontier models, such as OpenAI's o1, at a fraction of the cost. Still, R1 can be expensive for use cases with high traffic or low latency requirements.

DeepSeek R1's strength lies in its explicit step-by-step reasoning. Before producing a final answer, it generates an internal "chain of thought" (CoT) to systematically reason through each problem. This process is a form of test-time computation, allowing the model to dynamically allocate more compute to complex problems. However, these extended reasoning sequences typically increase inference cost.

Distillation

Distillation is a method for transferring knowledge from a large, more powerful teacher model to a smaller, more cost-effective student model. According to the DeepSeek R1 paper, R1 is highly effective in this teacher role. Its detailed CoT sequences guide the student model to break down complex tasks into smaller, more manageable steps.

Comparing Distillation to Human-Labeled Data

Although fine-tuning with human-labeled data can produce specialized models, collecting both final answers and their corresponding reasoning steps is expensive. Distillation scales more easily: rather than relying on human annotations, the teacher model automatically generates the training data for the student.

A Side Note on Terminology

The term "distillation" can refer to different techniques:

Distribution Distillation:

- Aligns the student model's output token distribution with the teacher's using Kullback-Leibler divergence (KL divergence).

- Works best when both models share the same architecture, tokenizer, and pre-training data.

Data Distillation:

- Uses the teacher model to generate completions for a set of prompts.

- Fine-tunes the student model using a standard cross-entropy loss on these generated outputs, skipping the KL-divergence term.

- Allows the teacher and student to be different model families and tokenizers (though if the teacher uses specialized tokens like __, it can be beneficial for both models to recognize them).

In this post, we focus on data distillation because it supports a wider variety of student-teacher pairs.
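To make the difference between the two objectives concrete, here is a minimal sketch for a single token position. It is not the loss code used in this post: the four-token vocabulary and the logit values are made up for illustration, and the helper names (`softmax`, `kl_divergence`, `cross_entropy`) are our own.

```python
import math

def softmax(logits):
    """Convert raw logits to a probability distribution."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q) for two distributions over the same vocabulary."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def cross_entropy(q, target_index):
    """Negative log-likelihood of one target token under q."""
    return -math.log(q[target_index])

# Toy 4-token vocabulary; these logits are illustrative assumptions.
teacher_probs = softmax([2.0, 1.0, 0.1, -1.0])
student_probs = softmax([1.5, 1.2, 0.3, -0.5])

# Distribution distillation: needs the teacher's full token distribution,
# so both models must agree on what each vocabulary index means.
distribution_loss = kl_divergence(teacher_probs, student_probs)

# Data distillation: needs only the token the teacher actually emitted
# (here, its most likely token), which is then re-tokenized for the student.
teacher_token = max(range(len(teacher_probs)), key=teacher_probs.__getitem__)
data_loss = cross_entropy(student_probs, teacher_token)

print(f"KL loss: {distribution_loss:.4f}, cross-entropy loss: {data_loss:.4f}")
```

The KL term compares whole distributions index by index, which is why a shared tokenizer matters there. The cross-entropy term only needs the teacher's generated text.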

Data Generation

Training data is often a bottleneck in model development. In a recent post (add link), we explored how to generate labels by combining model output with a verification function. Distillation takes a different approach, using a teacher model to synthesize missing completions.

DeepSeek R1 stands out because it not only provides final answers but also reveals its detailed chain of thought, unlike other reasoning models that keep this internal process hidden. If your dataset includes ground truth answers, you can identify high-quality synthetic CoTs through rejection sampling, selecting only the best chains to further improve your fine-tuned model. Rejection sampling can remove incorrect data examples either by comparing the generated data against ground truth labels or by applying a user-defined validation function. From the interface perspective, the validation function resembles the verifiable reward function used by value-model-free RL methods like those described in our recent blog post.
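As a rough illustration of rejection sampling against ground truth labels, the sketch below keeps only the candidate CoTs whose final number matches the label. The answer-extraction rule (take the last number in the completion), the function names, and the sample completions are all assumptions made for this example, not the pipeline used in the study.

```python
import re

def extract_final_answer(completion):
    """Return the last number in a completion, or None if there is none."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", completion.replace(",", ""))
    return numbers[-1] if numbers else None

def is_correct(completion, ground_truth):
    """Hypothetical validation function: compare final answers numerically."""
    answer = extract_final_answer(completion)
    return answer is not None and float(answer) == float(ground_truth)

def rejection_sample(candidates, ground_truth, validate=is_correct):
    """Keep only the candidate CoTs that pass the validation function."""
    return [c for c in candidates if validate(c, ground_truth)]

# Made-up teacher completions for one problem whose ground truth is 18.
candidates = [
    "She has 16 - 3 - 4 = 9 eggs left. 9 * 2 = 18. The answer is 18",
    "She has 16 - 3 = 13 eggs left. 13 * 2 = 26. The answer is 26",
    "I am not sure how to solve this.",
]
accepted = rejection_sample(candidates, "18")
print(len(accepted), "of", len(candidates), "candidates kept")
```

Passing a different `validate` callable swaps the ground-truth comparison for a user-defined check, which mirrors the two filtering options described above.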

Case Study: GSM8K

GSM8K (Grade School Math 8K) is a dataset of 8.5K diverse grade-school math word problems. Each data point includes:

1. A problem description.

2. A human expert's chain of thought.

3. The final answer.

We expanded this dataset by adding:

Synthetic R1 reasoning, i.e., the CoT produced by DeepSeek R1.

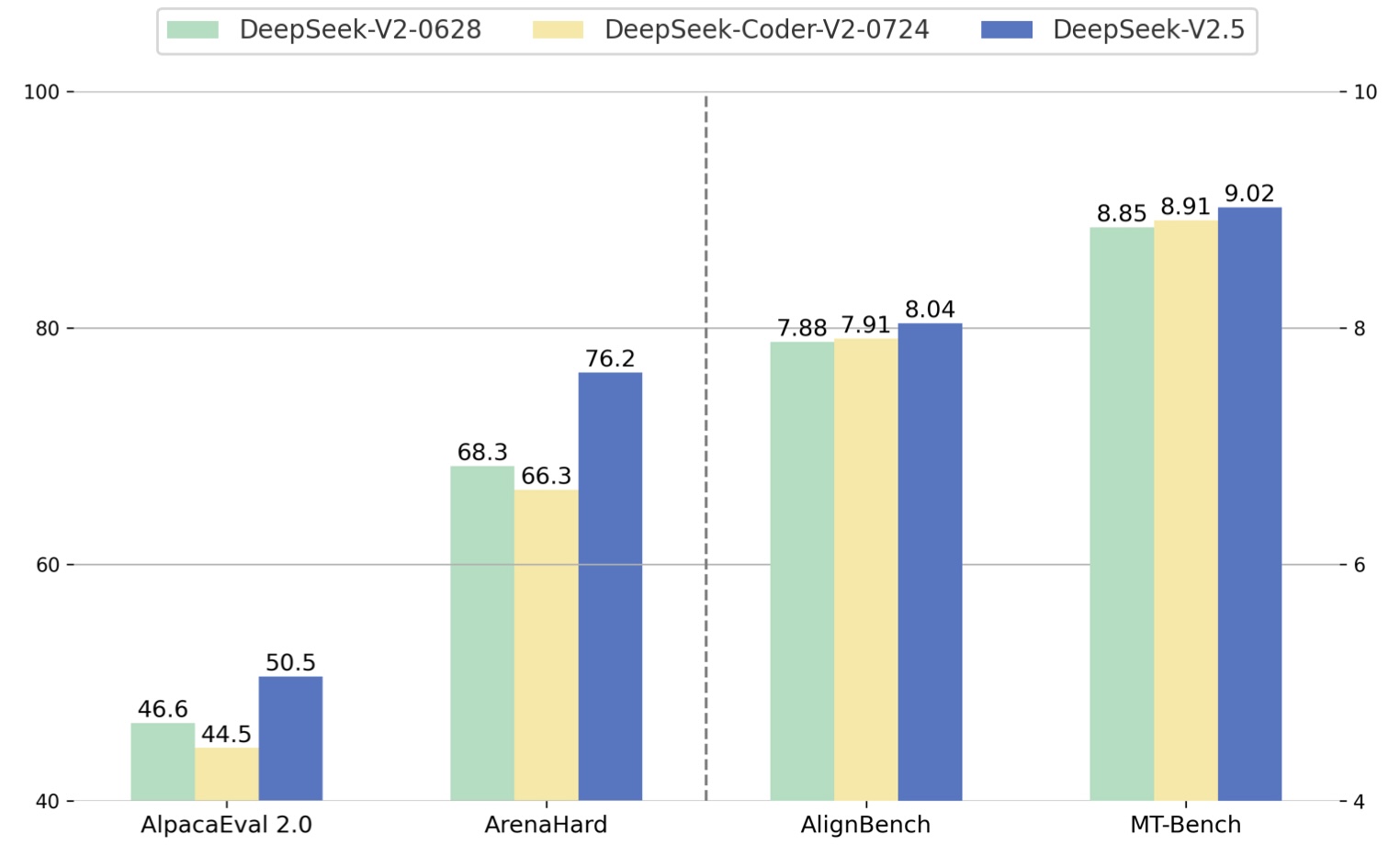

Then, we fine-tuned three variants of the model (using LoRA on llama-3.1-8B-instruct), each with different training targets:

Direct Answer Only: Generate the final answer without showing reasoning.

Human Expert CoT: Generate the final answer alongside a reasoning chain resembling the human expert's.

Synthetic R1 CoT: Generate the final answer alongside DeepSeek R1's synthetic reasoning chain.
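One way to picture the three training targets is as three different target strings built from the same expanded data point. The sketch below is only illustrative: the field names (`human_cot`, `r1_cot`, `answer`), the "The final answer is" template, and the toy problem are hypothetical and do not reflect the exact prompt format used in our runs.

```python
def build_target(example, mode):
    """Format the fine-tuning target string for one training variant."""
    answer = f"The final answer is {example['answer']}"
    if mode == "direct":
        # Direct Answer Only: no reasoning in the target.
        return answer
    if mode == "human_cot":
        # Human Expert CoT: human-written reasoning, then the answer.
        return f"{example['human_cot']}\n{answer}"
    if mode == "r1_cot":
        # Synthetic R1 CoT: teacher-generated reasoning, then the answer.
        return f"{example['r1_cot']}\n{answer}"
    raise ValueError(f"unknown mode: {mode}")

# Made-up data point; not taken from GSM8K.
example = {
    "question": "Tom has 3 bags with 4 apples each. How many apples does he have?",
    "human_cot": "3 * 4 = 12",
    "r1_cot": "Each bag holds 4 apples and there are 3 bags, so 3 * 4 = 12.",
    "answer": "12",
}
targets = {m: build_target(example, m) for m in ("direct", "human_cot", "r1_cot")}
for mode, target in targets.items():
    print(f"[{mode}]\n{target}\n")
```

The prompt (the problem description) stays the same across all three variants. Only the target the student is trained to reproduce changes.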

The table below summarizes average accuracy and reasoning length:

- Note: The accuracy for the 5-shot baseline may differ from numbers reported elsewhere due to different evaluation setups. The key focus is on comparing relative performance across distillation approaches, not on beating other models.

From this study, synthetic reasoning CoTs from DeepSeek R1 appear superior to human-expert CoTs in boosting performance, albeit with a higher inference cost due to their longer length.

Fireworks AI Inference and Fine-Tuning Platform

DeepSeek R1 is available on the Fireworks AI platform. A user-friendly distillation interface will soon be part of FireOptimizer. If you need earlier access, please get in touch to explore options.

Conclusions

By incorporating reasoning-based data through distillation, organizations can significantly improve model performance without bearing the full burden of human-annotated datasets. DeepSeek R1's ability to produce long, high-quality reasoning chains makes it a powerful teacher model, showing that, in many cases, the machine might just out-teach the human.